Is it AI? DeKalb’s wrong map shows potential pitfalls of government tech use

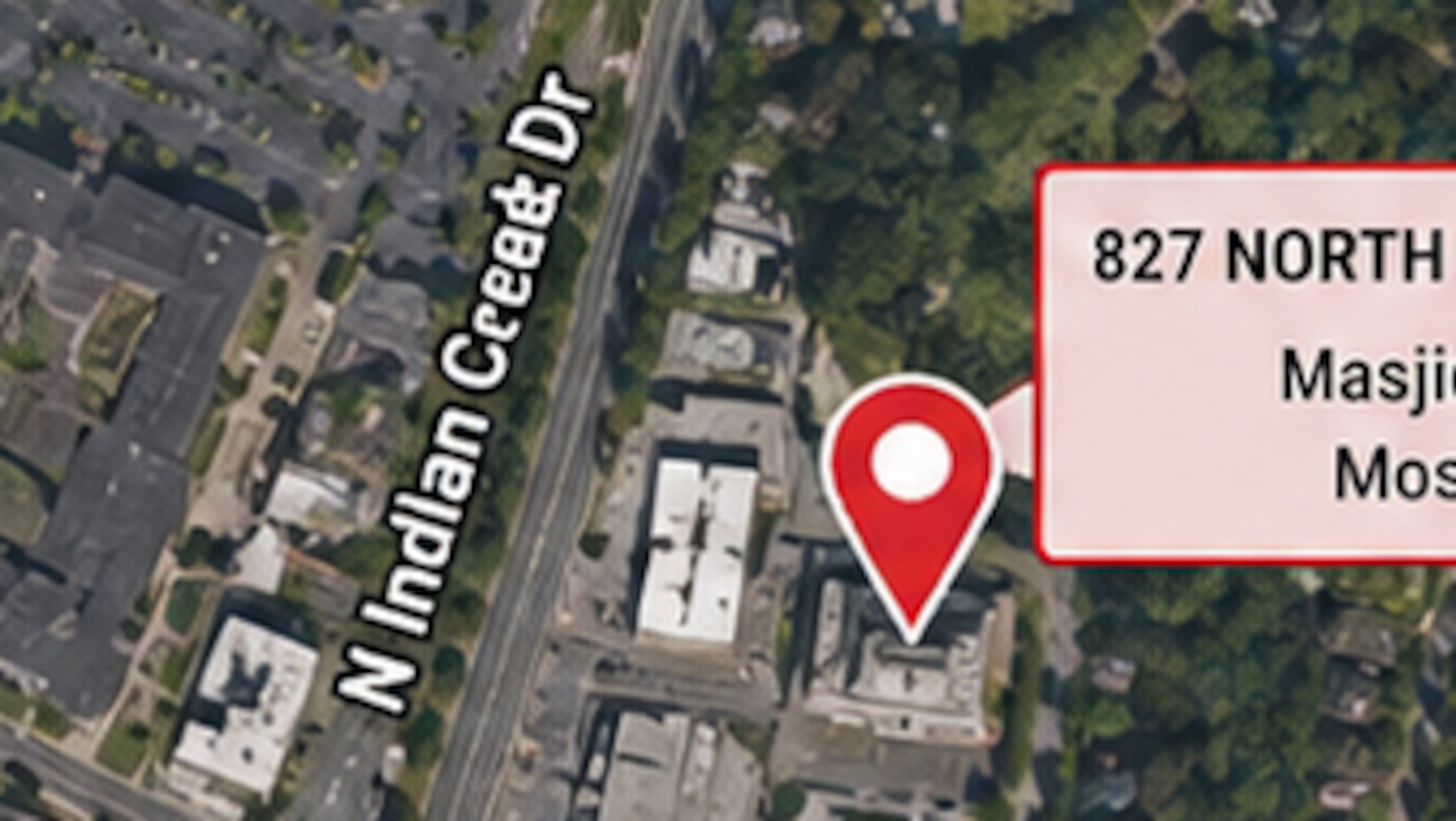

When DeKalb County sent out a satellite map illustrating locations where recent alleged arsons took place, it meant to inform the public with some facts. The problem? The map itself was fiction.

It places roads in incorrect places, and one of the street names includes odd characters that mimic letters. The roads match neither their real-world locations nor their positions relative to one another.

And, two experts who analyzed the map told The Atlanta Journal-Constitution, it may have been created using artificial intelligence.

It’s a scenario that has played out across the U.S. in recent months: government agencies using AI to improve public service but ending up with proverbial egg on their faces when left with AI mistakes.

The National Weather Service published and then retracted a social media graphic informing people of wind gusts in the made-up towns of “Whata Bod” and “Orangeotild,” The Washington Post reported in January. Callers to a state agency in Washington pressed 2 for a Spanish speaker and were instead met with an AI voice speaking English with a Spanish accent, AP News reported in February.

In DeKalb County, interim Fire Chief Melvin Carter said the map was meant to illustrate the general area where the fires were set, not pinpoint exact locations. But he acknowledged the inaccuracies, apologized and said the county will have internal conversations around properly reviewing content before it goes out.

“It’s just a bad map,” he said. “This is a moment where we could have been a little more accurate or at least been a little bit better with the communication.”

He said he doesn’t know exactly how the map was created but thinks someone pulled an image off Google for it. The county’s GIS department was not involved, he added. The GIS team created a new map that went out to news outlets Wednesday after the AJC asked about the irregularities.

Neither Carter nor Lorraine Cochran-Johnson, DeKalb’s CEO, would confirm whether the map was created using AI. Cochran-Johnson’s office didn’t answer specific questions about the map’s origin, but did refer to Carter’s explanation about it depicting the general area rather than specific locations.

While AI experts say they cannot tell definitively whether the map was AI-generated, they agreed the mistakes align with common markers seen with AI content.

“It’s very difficult to say definitely that this is AI-generated completely,” Pascal Van Hentenryck, a Georgia Tech professor who specializes in AI, said in an interview. “There are a lot of mistakes on this map.”

Van Hentenryck asked Microsoft Copilot, a generative AI chatbot, to make a map showing the fires. It made similar mistakes, with the locations placed in a circle around the campus and with streets in the wrong places. It looks, however, very convincing, he said.

AI-generated content looks and feels like a human made it because AI models are trained on human content, Van Hentenryck said. That’s why he advocates for agencies to disclose when they use the technology, he said.

“When you show a map which is annotated like that, it seems like a human has done it, and you have a tendency to trust it,” he said.

He participated in a Georgia Senate study committee on AI in 2024. Experts and lawmakers determined the state should pass laws requiring local governments to implement policies ensuring safe and ethical use of the technology. That legislation did not pass, but other measures regulating certain facets of AI use made it to the governor’s desk.

Senate Bill 540 requires AI companion chatbots to remind their users that they are interacting with a bot, not the real person the bot is designed to mimic. Senate Bill 444 ensures a human, not solely AI or other software, decides whether a patient’s medical procedure is covered by insurance.

Laws in states across the U.S. and in other countries have created a patchwork of regulations that are not standardized across the board, Van Hentenryck said.

“It’s really, really not unified in any way,” Van Hentenryck said. “We have to make an effort in actually regulating without impeding innovation.”

Local governments in Georgia do not currently face federal or state rules on AI use or disclosure, but the state does have an Office of Artificial Intelligence that provides guidance for state agencies and employees. The state guidelines follow five principles: get approval, use tools properly, be vigilant in virtual meetings, do not risk private data and beware of bias, according to its website.

Arun Rai, a Georgia State University professor and researcher specializing in AI, is on the state’s AI advisory council, which provides oversight on AI in the state. He evaluated DeKalb’s map and confirmed it does have some irregularities that would be consistent with the use of AI.

But whether it was a human error or AI, the outcome is the same, he said.

“Regardless of how we got here, I would say that the same thing failed: We did not have verification before public dissemination, whether it was a generative tool or a human error,” Rai said. “If it enters an official channel without controls or verification, then we risk public trust in important government communication being eroded, and that’s hard to fix.”

Earlier this year, a Clayton County prosecutor was admonished by the Georgia Supreme Court for using AI in a legal brief that contained fake citations.

In that case, Hannah Payne had requested a new trial, but the judge denied her motion and signed off on the proposed order prepared by the prosecutor who had used AI. The district attorney later apologized to the state high court over the incident.

Rai said DeKalb County’s map raises questions around the use of AI and how government agencies should implement parameters around certain technologies.

“This is public information in a public safety context, so I think it’s an important issue,” Rai said.

Some metro Atlanta news organizations published the county’s faulty map depicting the fire locations.

In situations where public information officers and news reporters alike are moving quickly to disseminate news, striking the right balance between speed and accuracy is an ongoing effort, Carter said.

“Our goal is to never sacrifice accuracy. When we fall short, we will acknowledge it, correct it and improve our processes,” he said.

The media often rely on government agencies to provide accurate information designed to inform the public, said Richard Griffiths, spokesperson for the Georgia First Amendment Foundation. When that trust is broken, it can also erode the audience’s trust in the news media, he said.

“Just as we’re seeing AI build momentum in every part of our society, it’s important that the public have trust in the institutions that choose to use AI that they’re using it appropriately and cross-checking it carefully,” Griffiths said.

Disclosures are a key element to maintaining that trust, experts say. Van Hentenryck said technology companies are moving to embed information in AI-generated content that will show consumers exactly where it came from and how it was created. Requiring such disclosure would enable governments to better protect individuals, particularly those who are not well-versed in identifying AI content.

“We will know who has generated that and what tools have been used and what data has been used,” Van Hentenryck said. “This provenance information is something that we can actually require and automate.”

As businesses and governments begin to adopt AI, experts agree it’s imperative that they also put proper processes in place to ensure its accuracy and ethical use. Generative AI is a tool that is not going away anytime soon, Van Hentenryck said.

“I think people should use AI. I think they have to understand the limitations, and we have to train people to use it in a trustworthy fashion,” he said.

Some of the ways they can do so, Rai said, include human reviews to verify accuracy, transparent disclosures of what technology was used, and processes or “audit trails” that show every step, ensuring people can look back and see how the content was created.

“I think we are at a point where people have to question, and, at least, ask the question, ‘Could this have been AI-generated or AI-edited?’ I think we’re reaching that point,” Rai said.